Challenges with Quality Vision Inspection Systems

Quality vision inspection systems play a vital role in the production of automotive engines where cast and machined frames are matched and assembled with various vendor-supplied components. Slight defects in materials or incorrectly installed parts can lead to the failure of the engine. Manual checks and validations are frequent throughout the assembly process, yet results are inherently inconsistent, and costs continue to escalate over time. In an effort to automate many of these quality inspections, machine vision has become a critical tool to detect defects and verify assembly. The goal of machine vision integration has always been to emulate the capabilities of human vision and cognition, while incorporating the speed and repeatability of computer processing and analysis algorithms. However, with traditional machine vision techniques, creating versatile and reliable inspection has proven challenging, prohibitively costly, or in many cases programmatically impossible.

Rocker Arm Vision Inspection

A manufacturer of automotive engines turned to Automationnth to make its quality vision inspection systems more reliable. The customer wanted to replace an automated vision check for validating the presence and proper installation of intake and exhaust valve rocker arms. A previous attempt to automate this inspection with traditional vision techniques had been replaced by manual inspection because it was not dependable.

The Rocker Arm inspections verify that the appropriate rockers are correctly installed to the head valves before the engine block enters final assembly. Many factors complicate the programming of this automated vision task. For instance, there are multiple rocker arm styles that need to be checked (see Figure 2 for examples). Using traditional pattern locate and measurement techniques, each rocker style would likely require very different programming to analyze for presence and proper positioning. Also, surface areas often contain random oil or water spots, and installed components possess significant ranges of allowable orientation. These variable conditions make development of a trustworthy, automated vision solution difficult and ongoing support of the system time-consuming, costly, and uncertain. For the customer, frequent line interruptions from a 20%+ false-failure rate resulted in too much downtime to meet production goals. Maintenance engineers also struggled to support inspection algorithms and make updates for component changes and new product types.

Solution

Cognex, a global leader in machine vision, has a product called VisionPro ViDi that uses deep learning-based machine vision tools to tackle complex machine vision tasks. ViDi, via controlled training of labeled images, allows engineers to create repeatable inspections that tolerate erratic conditions and unpredictable defects.

The Cognex ViDi vision software mimics the visual learning and decision processes of the human brain. Hundreds of labeled images are presented to the neural net-based software to “train” the range of allowable component variance. Critically, this means that the system can make accurate good and bad part predictions even when it encounters conditions that were not presented during the training phase. As an added benefit, if a runtime decision is deemed inaccurate, corrections can be made without recoding software algorithms and resulting training updates can be rapidly redeployed back into production.

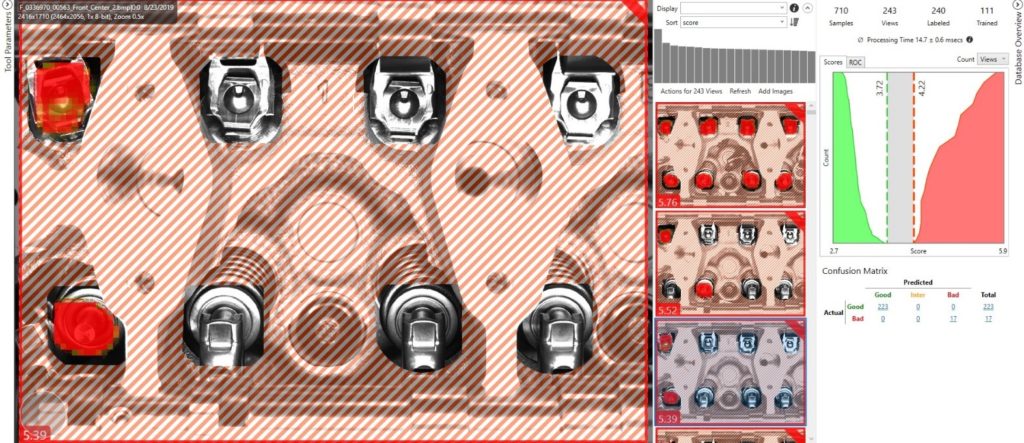

For the rocker arm quality vision inspection system, Automationnth designed a six-camera setup to simultaneously capture and process multiple views of the engine rocker arms: Front/Rear Center, Front/Rear Exhaust, and Front/Rear Intake. Separate, though structurally identical, ViDi workspaces for each of the six views were then designed and trained with runtime images from each respective view. Three different types of ViDi tools are utilized in these inspections: 1) a Blue Locate Tool is used to fixture the image to account for positional deviation of the engine pallet on the conveyor, 2) the Green Classify Tool subsequently identifies the engine type based on the visible components, and 3) the Red Analyze Tools then perform the final image analysis deciding if the rockers are present and properly installed. The Red Tools, in this case, utilize what is called the Unsupervised learning mode. Good images are used to train the spectrum of viable features of interest, and deviations score higher within the ViDi decision matrix.

Accumulated bad images are added to the training and processed to define a runtime threshold for the final good vs bad verdict. See the Confusion Matrix window in the example image below.
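The thresholding step described above can be illustrated with a minimal sketch. It is not ViDi's internal method: the scores, the function names, and the rule of minimizing total misclassifications are all assumptions chosen for illustration. It only shows how a set of good and bad deviation scores leads to a threshold and a confusion matrix.

```python
from dataclasses import dataclass


@dataclass
class ConfusionMatrix:
    true_good: int   # good part, passed
    false_bad: int   # good part, failed (a false failure)
    false_good: int  # bad part, passed (an escape)
    true_bad: int    # bad part, failed


def confusion_at(threshold, good_scores, bad_scores):
    """Count verdicts when any score at or above the threshold is called bad."""
    false_bad = sum(s >= threshold for s in good_scores)
    true_bad = sum(s >= threshold for s in bad_scores)
    return ConfusionMatrix(
        true_good=len(good_scores) - false_bad,
        false_bad=false_bad,
        false_good=len(bad_scores) - true_bad,
        true_bad=true_bad,
    )


def pick_threshold(good_scores, bad_scores):
    """Return the lowest candidate threshold with the fewest misclassifications."""
    candidates = sorted(set(good_scores) | set(bad_scores))

    def errors(t):
        cm = confusion_at(t, good_scores, bad_scores)
        return cm.false_bad + cm.false_good

    return min(candidates, key=errors)


# Hypothetical deviation scores (higher means further from the trained "good" range).
good = [0.05, 0.10, 0.12, 0.20, 0.31]
bad = [0.45, 0.60, 0.28, 0.90]

threshold = pick_threshold(good, bad)
matrix = confusion_at(threshold, good, bad)
print(threshold, matrix)
```

With these made-up scores the good and bad ranges overlap, so no threshold separates them perfectly. In practice the choice also depends on whether a false failure or an escaped defect is more costly.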

In the example below, a heatmap image is being displayed for a failed Red Tool rocker arm inspection. One rocker is not properly secured and fails for orientation, while another is altogether missing. For processing, much of the image is masked out, meaning that these regions are not analyzed. This increases processing speed for the high-resolution images. Total analysis time for a single image is approximately 50-80 milliseconds for all tools.

ViDi proved exceptionally capable of performing the required assessments, improving inspection accuracy, eliminating downtime interventions, and easing support burdens on maintenance.

Challenges & Lessons Learned

Though the implementation of the ViDi workspace was relatively straightforward, acquiring sufficient example images and applying “expert” labeling to hundreds of images was a time-consuming, iterative endeavor. Not only must numerous good images be trained for the system to learn the broad range of good cases, but bad images must also be obtained and analyzed to comprehend how ViDi will score various feature deviations. As a result, once the inspection system is installed, it can then take weeks to accumulate a representative set of training images necessary to build a robust inspection system. It is critical that an “expert” be involved in this process, as subjectivity can be inherent in labeling images, and ViDi is only as smart as it is consistently trained.

During training of the ViDi neural net, a large volume of sample images can be accumulated. Too many training images can result in a condition known as overtraining. Overtraining of the neural net can slow performance, negatively impact the system’s ability to characterize new image data, or in some cases, render the output scores meaningless. During development and initial testing of the Rocker Arm project, Automationnth engineers found that the training process was best accomplished iteratively. That is, don’t throw a huge number of images into the workspace database at the outset. Start with a small, but representative, set of images. Then, during commissioning, identify difficult and mischaracterized cases for subsequent retraining. With each batch update, the inspection becomes smarter, and the potential pitfalls associated with overtraining can be mitigated.
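The iterative approach can be sketched as a small bookkeeping loop. This is a simplified illustration and not a ViDi workflow: the image identifiers, the `(image_id, score, expert_label)` tuple layout, and the `batch_limit` parameter are hypothetical. The idea is to add only cases the current model got wrong, in small batches, instead of every captured image.

```python
def select_for_retraining(results, threshold):
    """Return ids of images whose verdict disagreed with the expert label."""
    picked = []
    for image_id, score, expert_label in results:
        verdict = "bad" if score >= threshold else "good"
        if verdict != expert_label:
            picked.append(image_id)
    return picked


def grow_training_set(training_ids, results, threshold, batch_limit):
    """Add at most batch_limit new mischaracterized images to the training set."""
    new_ids = [
        image_id
        for image_id in select_for_retraining(results, threshold)
        if image_id not in training_ids
    ]
    return training_ids + new_ids[:batch_limit]


# Hypothetical commissioning results: (image id, deviation score, expert label).
results = [
    ("img010", 0.10, "good"),  # correct pass, not needed for retraining
    ("img011", 0.60, "good"),  # false failure
    ("img012", 0.20, "bad"),   # escape
    ("img013", 0.90, "bad"),   # correct fail
    ("img014", 0.70, "good"),  # false failure, deferred by the batch limit
]

training = ["img001", "img002"]
updated = grow_training_set(training, results, threshold=0.5, batch_limit=2)
print(updated)
```

Capping each batch keeps the workspace from growing faster than the expert can review labels, which is one way to act on the overtraining concern described above.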

Even when managing the images used for training, ViDi workspaces can still grow to many gigabytes, making tasks such as training, exporting, importing, and deployment consume significant amounts of the developer’s valuable time. Training large, high-resolution image sets can be unavoidably time-consuming. GPU performance is critical here to minimize wait time, and therefore, it is recommended that ViDi developers invest in high-end GPUs to optimize training durations. On this project, the NTH team utilized the Nvidia GTX 1080, with training durations ranging from three to six minutes per tool. Lesser GPUs are not generally recommended, and any improvement in training performance will be beneficial over the life of the project.

Processing multiple engine types became another challenge that confronted the NTH team when architecting the ViDi workspace solution for the rocker inspection. Engines of different model types could be presented for inspection randomly, and the station’s cycle requirements afforded little excess time to facilitate a lengthy changeout of recipes. ViDi workspaces can be divided into Streams, which originally appeared to be a logical means of separating engine type inspection trainings. This worked well for logically organizing product inspections; however, the team discovered that switching streams during runtime was not a quick process within ViDi, sometimes taking 10 to 20 seconds or more to internally load ViDi runtime data. Because that delay was unacceptable for the station’s cycle time, an alternative workspace design was engineered, in which the Green tool was integrated and trained to automatically classify and route the image to an appropriate Red Analyze Tool based on the engine’s part type. As a result, with image classification occurring in as little as five milliseconds, stream switching became unnecessary.
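The classify-and-route design can be sketched as a dispatch table. The sketch below does not use the ViDi API. The dictionary standing in for an image, the engine type names, the thresholds, and all function names are placeholders. It only illustrates keeping every analyzer resident and choosing one per image, in place of reloading a recipe when the engine type changes.

```python
from typing import Callable, Dict

Image = dict  # stand-in for a camera frame; a real system would pass pixel data


def classify_engine_type(image: Image) -> str:
    """Placeholder for the Green Classify step; reads a tag from the stand-in image."""
    return image["engine_type"]


def make_analyzer(threshold: float) -> Callable[[Image], bool]:
    """Build a placeholder Red Analyze step that passes when the score is below threshold."""
    def analyze(image: Image) -> bool:
        return image["deviation_score"] < threshold
    return analyze


# All analyzers are created once and stay loaded, so routing is only a lookup.
ANALYZERS: Dict[str, Callable[[Image], bool]] = {
    "type_a": make_analyzer(0.40),  # hypothetical engine types and thresholds
    "type_b": make_analyzer(0.55),
}


def inspect(image: Image) -> bool:
    """Classify the image, then route it to the analyzer trained for that type."""
    engine_type = classify_engine_type(image)
    analyzer = ANALYZERS.get(engine_type)
    if analyzer is None:
        raise ValueError(f"no analyzer trained for engine type {engine_type!r}")
    return analyzer(image)


print(inspect({"engine_type": "type_a", "deviation_score": 0.50}))
print(inspect({"engine_type": "type_b", "deviation_score": 0.50}))
```

The same deviation score can pass for one engine type and fail for another because each type has its own analyzer and threshold, which is why the classification step has to run first.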

Quality Vision Inspection System Results

The ViDi system now achieves 98% to 99% accuracy in labeling inspected rocker arms. This allowed the customer to eliminate a manual inspection station from the line, improving quality and reducing long-term production costs. Another key aspect of the project’s success was the simplicity of the ViDi implementation and the ability to hand off ownership of the ViDi retraining to the customer’s maintenance staff. The customer is no longer forced to endure significant downtime recoding vision algorithms to correct failed inspections. Mischaracterized inspections can be easily added to the ViDi workspaces, retrained offline, and rapidly redeployed into production with little unscheduled downtime. The system was recognized by the plant’s production engineering group for its innovation in solving a complex quality vision inspection problem and enhancing quality control. As a leader in machine vision integration, Automationnth continues to embrace ViDi as a principal tool for solving some of the most challenging machine vision problems across a broad range of industries.